The Human Intelligence Layer for AI

Human intent signals + Efficient edge processing + Structured intelligence

We Built the Chip First. Everything Else Follows.

Most AI companies build software and hope hardware catches up. We did it differently — we started with a proprietary edge chip designed specifically to capture and structure human eye data in real time. That chip works. It was demonstrated at CES 2026.

This page explains the full technology stack: from raw eye signal to structured AI-ready intelligence.

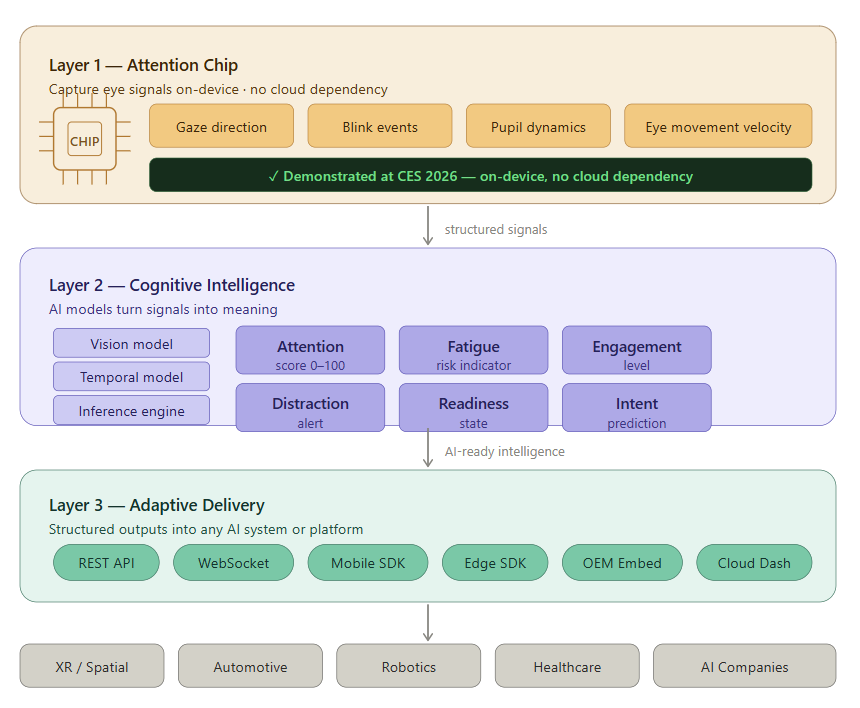

The Three-Layer Stack

1. Layer 1 - Structured Signals

Real-Time Human Data Begins at the Source.

Before intelligence is possible, high-quality signals must be captured. Attentix captures human attention signals directly from sensing hardware and converts them into clean, machine-readable data streams at the edge — before any AI model ever sees the data.

This step is where most competitors cut corners. Low-quality or high-latency input data produces unreliable intelligence downstream. Attentix solves this at the hardware level.

Supported Inputs:

- RGB camera input

- IR / depth camera input

- Eye-tracking sensors

- Single-eye or binocular capture

- High-frequency sensor streams

Extracted Signals:

- Gaze direction & fixation points

- Blink rate and blink event detection

- Pupil dynamics and dilation

- Eye movement velocity and saccades

- Attention-state behavioral indicators
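To make "eye movement velocity and saccades" concrete, here is a minimal sketch of the widely used velocity-threshold approach (often called I-VT) for separating saccades from fixations. It is an illustration only, not Attentix's on-chip method: the `GazeSample` type, the `classify_ivt` function, and the 30 deg/s threshold are all assumptions, and gaze angles are treated as planar coordinates for simplicity.

```python
import math
from dataclasses import dataclass


@dataclass
class GazeSample:
    """Hypothetical gaze sample; field names are illustrative."""
    t: float      # timestamp in seconds
    x_deg: float  # horizontal gaze angle in degrees
    y_deg: float  # vertical gaze angle in degrees


def classify_ivt(samples, velocity_threshold_deg_s=30.0):
    """Label each interval between consecutive samples as 'saccade' or 'fixation'.

    An interval is a saccade when the angular velocity across it exceeds
    the threshold (an assumed, illustrative value).
    """
    labels = []
    for prev, cur in zip(samples, samples[1:]):
        dt = cur.t - prev.t
        if dt <= 0:
            raise ValueError("timestamps must be strictly increasing")
        # Planar approximation of angular distance between the two samples.
        distance = math.hypot(cur.x_deg - prev.x_deg, cur.y_deg - prev.y_deg)
        velocity = distance / dt
        labels.append("saccade" if velocity > velocity_threshold_deg_s else "fixation")
    return labels


samples = [
    GazeSample(0.000, 0.0, 0.0),
    GazeSample(0.010, 0.1, 0.0),  # slow drift
    GazeSample(0.020, 2.1, 0.0),  # fast jump
    GazeSample(0.030, 2.2, 0.1),  # slow drift again
]
labels = classify_ivt(samples)
print(labels)
```

A production pipeline would typically add filtering and minimum-duration rules on top of a raw threshold like this one.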

Why This Matters Technically

- Structured signals require significantly lower bandwidth than raw video streams

- Reduced preprocessing load enables faster downstream inference

- Privacy-first architecture — no raw facial or video data leaves the device

- Clean structured input means AI models run more accurately and efficiently
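The bandwidth point can be illustrated with back-of-the-envelope arithmetic. The figures below are assumptions chosen for illustration (uncompressed 8-bit grayscale VGA video at 60 fps versus eight 32-bit fields per sample at 120 Hz); they are not Attentix specifications, and compressed video would narrow the gap.

```python
def raw_video_bytes_per_second(width, height, bytes_per_pixel, fps):
    """Data rate of an uncompressed video stream."""
    return width * height * bytes_per_pixel * fps


def structured_bytes_per_second(fields_per_sample, bytes_per_field, sample_rate_hz):
    """Data rate of a stream of fixed-size structured samples."""
    return fields_per_sample * bytes_per_field * sample_rate_hz


# Assumed example: 640x480, 1 byte per pixel, 60 frames per second.
raw = raw_video_bytes_per_second(640, 480, 1, 60)

# Assumed example: 8 float32 fields (gaze vector, pupil size, ...) at 120 Hz.
structured = structured_bytes_per_second(8, 4, 120)

ratio = raw / structured
print(f"raw: {raw} B/s, structured: {structured} B/s, ratio: {ratio:.0f}x")
```

Under these assumed numbers the structured stream is several thousand times smaller than the raw one; the real ratio depends on the sensor, the encoding, and the fields transmitted.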

2. Layer 2 - Cognitive Intent

Signals Become Meaning

Raw signals alone are not useful. What AI systems need is interpreted, structured intelligence — answers to questions like “Is this person paying attention?”, “Are they fatigued?”, “Are they about to act?”

Attentix uses a purpose-built AI model stack to transform low-level eye signals into high-level human-state understanding, in real time.

AI Model Stack:

- Vision models for precise eye-region detection and tracking

- Temporal sequence models for behavioral pattern recognition

- Behavioral inference engine for state classification

- Context fusion layer for multi-signal interpretation

- Edge-optimized quantized models for low-power deployment
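As a rough illustration of what "quantized models" means in the last item above, the sketch below applies symmetric 8-bit quantization to a small list of weights. This is a generic textbook technique shown under assumed values; the function names are hypothetical and nothing here describes the actual Attentix model stack.

```python
def quantize_int8(weights):
    """Symmetric int8 quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One shared scale for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]  # assumed example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, scale, restored)
```

Each weight is stored in one byte instead of four, at the cost of a rounding error of at most half a quantization step per value.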

Intelligence Outputs Delivered:

- Attention score (continuous 0–100 scale)

- Engagement level (low / medium / high)

- Fatigue risk (onset detection and severity)

- Distraction alert (event-based trigger)

- Readiness state (active / passive / disengaged)

- Intent prediction (pre-action signal)

These outputs are structured, labeled, and immediately consumable by any downstream AI system, application, or platform, with no additional model training required on the receiving end.
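One way a receiving system might model the outputs listed above is a small typed record. The sketch below is hypothetical: the Attention Intelligence API is still in development, so every field name, the `fatigue_severity` range, and the JSON layout are assumptions made for illustration.

```python
import json
from dataclasses import dataclass
from enum import Enum


class Engagement(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class Readiness(Enum):
    ACTIVE = "active"
    PASSIVE = "passive"
    DISENGAGED = "disengaged"


@dataclass
class AttentionState:
    """Hypothetical record mirroring the intelligence outputs listed above."""
    timestamp_ms: int
    attention_score: float   # continuous 0-100 scale
    engagement: Engagement
    readiness: Readiness
    fatigue_severity: float  # assumed 0.0-1.0 range
    distraction_alert: bool

    def __post_init__(self):
        if not 0 <= self.attention_score <= 100:
            raise ValueError("attention_score must be within 0-100")
        if not 0.0 <= self.fatigue_severity <= 1.0:
            raise ValueError("fatigue_severity must be within 0.0-1.0")

    def to_json(self):
        """Serialize to JSON, writing enum members as their string values."""
        return json.dumps({
            "timestamp_ms": self.timestamp_ms,
            "attention_score": self.attention_score,
            "engagement": self.engagement.value,
            "readiness": self.readiness.value,
            "fatigue_severity": self.fatigue_severity,
            "distraction_alert": self.distraction_alert,
        })


state = AttentionState(1000, 87.5, Engagement.HIGH, Readiness.ACTIVE, 0.1, False)
print(state.to_json())
```

Validating ranges at construction time keeps malformed values from reaching downstream logic, whatever the final schema turns out to be.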

3. Layer 3 - Adaptive Action

Intelligence That Powers Real Systems.

Structured outputs are delivered in real time into applications, devices, and enterprise systems through multiple integration pathways. Attentix is designed to fit into existing AI pipelines with minimal engineering overhead.

Integration Ready:

- REST API (request-response for standard integrations)

- WebSocket streaming (continuous real-time data delivery)

- Mobile SDK (iOS and Android)

- Edge SDK (for embedded and on-device deployment)

- Cloud dashboard integration

- OEM embedded deployment (custom hardware integration)
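To show how an application might act on a continuous stream from any of these pathways, the sketch below processes a few JSON messages and raises a distraction alert only after several consecutive low attention scores. The message format, the `DistractionMonitor` class, and the threshold values are all assumptions; the network transport is left out so the example stays self-contained.

```python
import json


class DistractionMonitor:
    """Fires once when attention stays below a threshold for N samples in a row."""

    def __init__(self, threshold=40.0, consecutive=3):
        self.threshold = threshold      # assumed score cutoff on the 0-100 scale
        self.consecutive = consecutive  # assumed debounce length
        self._low_count = 0
        self.alerting = False

    def update(self, attention_score):
        """Return True only on the sample where a new alert begins."""
        if attention_score < self.threshold:
            self._low_count += 1
        else:
            # Attention recovered: reset the counter and clear the alert.
            self._low_count = 0
            self.alerting = False
        if self._low_count >= self.consecutive and not self.alerting:
            self.alerting = True
            return True
        return False


def process_stream(lines, monitor):
    """Parse one JSON message per line and collect alert timestamps."""
    alerts = []
    for line in lines:
        message = json.loads(line)
        if monitor.update(message["attention_score"]):
            alerts.append(message["timestamp_ms"])
    return alerts


# Hypothetical messages, as they might arrive over a streaming connection.
stream = [
    '{"timestamp_ms": 0, "attention_score": 82}',
    '{"timestamp_ms": 100, "attention_score": 35}',
    '{"timestamp_ms": 200, "attention_score": 30}',
    '{"timestamp_ms": 300, "attention_score": 28}',
    '{"timestamp_ms": 400, "attention_score": 25}',
    '{"timestamp_ms": 500, "attention_score": 90}',
]
alerts = process_stream(stream, DistractionMonitor())
print(alerts)
```

Debouncing like this avoids reacting to a single noisy sample, which matters when the downstream action is an audible or haptic warning.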

Target Platforms:

- Snapdragon / Android XR

- NVIDIA Jetson

- x86 Edge Systems

- iOS / Android

- Automotive ECUs

Outcomes:

- Real-time personalization based on user attention state

- Safer automation through continuous human-state monitoring

- Lower infrastructure cost versus cloud-dependent pipelines

- Faster decision cycles with on-device processing

- Scalable deployment across edge, mobile, and embedded environments

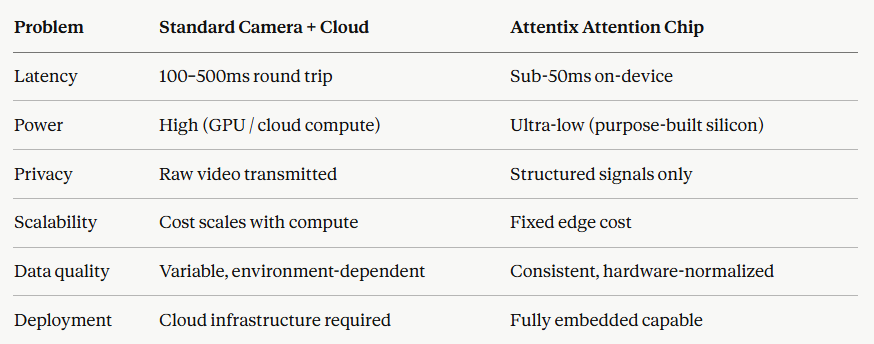

The Attention Chip – Our Hardware Moat

This is the part of Attentix that cannot be easily replicated.

Software-only eye-tracking solutions depend on general-purpose cameras and cloud processing pipelines. They are slow, power-hungry, privacy-risky, and expensive to scale. Attentix is different because we designed and built a dedicated edge chip, the Attention Chip, from the ground up for one purpose: capturing and structuring human eye data as efficiently as possible. It was demonstrated live at CES 2026.

What Is the Attention Chip?

The Attention Chip is a proprietary edge processor designed specifically for real-time human eye-signal capture and on-device structuring. Unlike general-purpose vision chips or repurposed mobile processors, the Attention Chip is architecturally optimized for the specific computational patterns of eye-tracking and attention inference.

It is not a camera. It is not a general vision processor. It is purpose-built silicon for human attention intelligence.

Key Technical Capabilities

1) On-Chip Processing: All signal capture and initial structuring happens directly on the chip. There is no dependency on an external CPU, GPU, or cloud endpoint for core eye-signal processing. This eliminates the primary latency bottleneck in competing solutions.

2) Ultra-Low Power Consumption: The chip is designed for continuous always-on operation in power-constrained environments such as wearables, XR headsets, automotive embedded systems, and mobile devices. This makes it viable for real-world deployment in a way that GPU-dependent solutions are not.

3) Neuromorphic-Inspired Architecture: The chip’s processing architecture is designed to mirror the efficiency of biological visual processing, handling sparse, event-driven signals rather than processing full image frames at high frequency. This is why it achieves low latency and low power simultaneously, without sacrificing signal quality.

4) Privacy-by-Design at the Silicon Level: No raw eye images or facial video data leave the chip. Only structured numerical outputs (gaze vectors, blink events, attention scores) are transmitted downstream. Privacy is not a software policy at Attentix. It is enforced by the hardware architecture itself.
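The event-driven idea in item 3 can be illustrated in software with a simple send-on-delta encoder: a value is emitted only when it has changed enough since the last emitted value, so a steady signal produces almost no traffic. This is a conceptual sketch under assumed values, not a description of the Attention Chip's circuitry; the function name, the sample data, and the threshold are hypothetical.

```python
def to_events(samples, threshold):
    """Emit (index, value) pairs only when the signal moves by >= threshold.

    The first sample is always emitted so the receiver has a starting value.
    """
    events = []
    last_emitted = None
    for index, value in enumerate(samples):
        if last_emitted is None or abs(value - last_emitted) >= threshold:
            events.append((index, value))
            last_emitted = value
    return events


# Assumed pupil-diameter readings in millimetres, sampled at a fixed rate.
pupil_mm = [3.00, 3.01, 3.02, 3.30, 3.31, 3.29, 3.60, 3.61]
events = to_events(pupil_mm, threshold=0.2)
print(f"{len(events)} events from {len(pupil_mm)} samples: {events}")
```

Because only changes are transmitted, the downstream load scales with how much the eye is doing rather than with the sampling rate.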

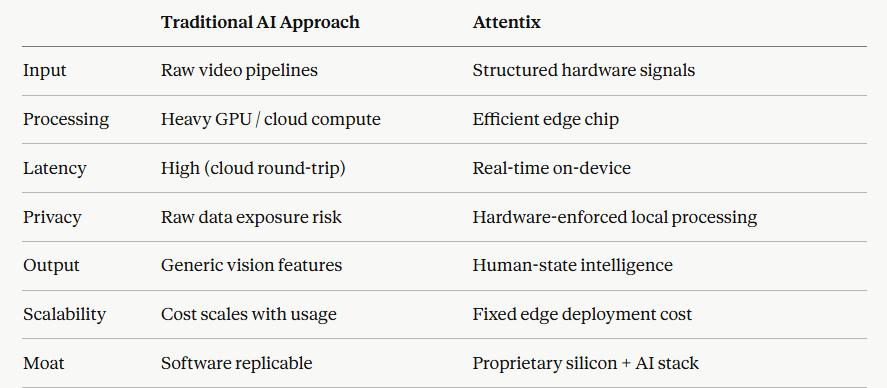

Why Chip-Level Eye Data Is Superior for AI

Most AI systems that incorporate eye data today rely on one of two approaches: standard RGB cameras with cloud-based processing, or high-cost dedicated eye-tracking hardware designed for research labs.

Both approaches fail in real-world, at-scale AI deployment for the same reasons:

Key Advantages for AI Systems Integrating Attentix

1) Lower Infrastructure Cost: Replacing cloud video pipelines with structured edge outputs significantly reduces per-unit infrastructure cost, which is particularly important for AI systems deployed at scale across fleets, devices, or users.

2) Privacy and Security by Design: Regulatory pressure on biometric and behavioral data is increasing globally, including the EU AI Act, GDPR, CCPA, and emerging automotive and healthcare data regulations. Attentix’s hardware-enforced privacy architecture means AI partners can adopt our stack without taking on additional compliance risk.

3) Real-Time AI Response: Attention and fatigue signals are only actionable if they are delivered fast enough for a system to respond. On-device processing eliminates the round-trip latency that makes cloud-based eye intelligence impractical for safety-critical or interactive applications.

4) Consistent, Normalized Data: Because signals are processed through the same hardware pipeline every time, the data is consistent across environments, lighting conditions, and devices. This is critical for AI teams training and fine-tuning models, because inconsistent input data is one of the primary causes of poor model generalization.

Why Attentix Wins

Built for the Platforms That Are Reshaping AI

Attentix is designed to integrate with the hardware and software ecosystems where human-machine interaction is becoming critical:

- XR and Spatial Computing — Android XR, Apple Vision Pro, Meta Quest, Snapdragon XR platforms

- Automotive — Driver Monitoring Systems (DMS), ADAS, autonomous vehicle HMI

- Robotics — Human-robot interaction, collaborative robotics, autonomous navigation with human-awareness

- Healthcare — Cognitive assessment, fatigue monitoring, clinical attention tracking

- Enterprise and Productivity — Attention-aware interfaces, focus tracking, adaptive UX

The Technical Defensibility of Attentix

VCs and technology partners often ask: what stops a large player from replicating this?

The answer is three-layered:

1) Proprietary Silicon: Designing and validating a purpose-built chip takes years and significant capital. Our Attention Chip is not a software feature; it is physical hardware with a demonstrated working prototype. Replicating it requires starting the silicon design process from scratch.

2) Proprietary Training Data: The AI models that run on and alongside the chip are trained on data generated by the chip itself. This creates a self-reinforcing data advantage: the longer we operate, the more chip-generated eye data we accumulate, and the better our models become. Competitors cannot train on our data.

3) Integrated Stack: The chip, the AI models, and the API layer are co-designed to work together. A competitor replicating only one layer (say, building a competing API) would be doing so without the hardware signal quality or the proprietary training data that make our outputs reliable.

Where We Are

✅ Attention Chip — Demonstrated at CES 2026. Core hardware working and validated.

✅ Core Signal Engine — Complete. Real-time eye signal capture and structuring operational.

🔵 Attention Intelligence API — In Development · 2026.

🔵 Attention Dataset v1 — In Development · 2026.

⬜ OEM Embedded Program — Planned · 2027.