The Intelligence Layer Between Your AI and the Human in Front of It

AI systems are getting smarter. But most of them are still blind to the most important variable in any human-machine interaction: the state of the person using them.

Is the driver paying attention? Is the XR user cognitively overloaded? Is the robot operator fatigued?

Without that signal, AI systems guess. With Attentix, they know.

The Attentix Platform is the software layer that sits between our Attention Chip hardware and your AI system — converting raw eye signals into structured, real-time human-state intelligence that any AI application can consume immediately.

What the Platform Does — In Plain Terms

You do not need to build eye-tracking models. You do not need to manage raw video pipelines. You do not need to handle biometric data compliance.

You call the API. You get the intelligence. Your AI acts on it.

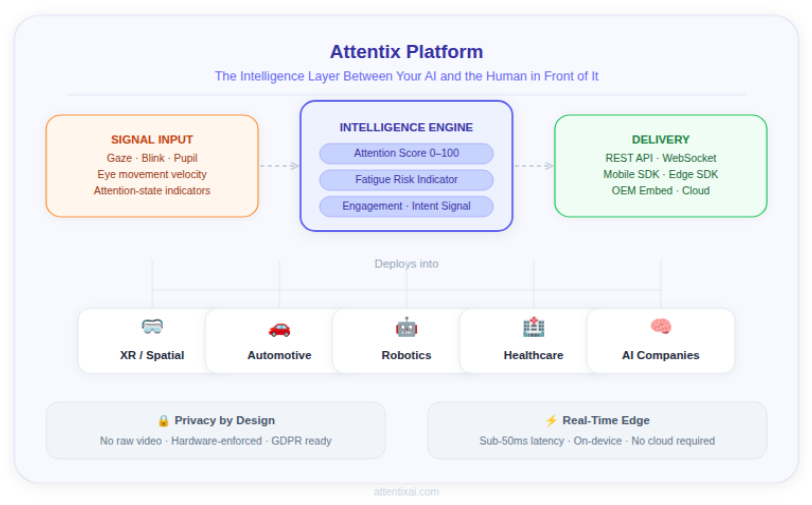

Platform Architecture

Layer 1 — Signal Input

Clean, structured data in. Every time.

The platform accepts real-time eye signals from the Attentix Attention Chip or compatible camera inputs and converts them into normalized, machine-readable streams — before any AI inference happens.

This normalization step is critical. Inconsistent or noisy input data is a leading reason eye-tracking and attention AI systems fail in production. The Attentix Signal Input Layer addresses this at the source.

Supported Inputs

- Attentix Attention Chip (primary — highest signal quality)

- RGB camera input

- IR / depth camera input

- Single-eye or binocular capture

- Wearable device sensor streams

- Time-synchronized behavioral signal feeds

Primary Signals Captured

- Gaze direction and fixation points

- Blink events and blink rate

- Pupil dynamics and dilation

- Eye movement velocity and saccades

- Presence and attention-state indicators
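To make the signal list concrete, here is a minimal sketch of how a normalized sample stream could be turned into gaze speed, saccade flags, and a blink count. The `GazeSample` fields, the helper names, and the 30 deg/s threshold are assumptions made for illustration; they are not the Attentix schema or its actual processing.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class GazeSample:
    """One normalized sample. Field names are hypothetical, not an Attentix schema."""
    t_ms: float       # capture timestamp in milliseconds
    yaw_deg: float    # horizontal gaze angle in degrees
    pitch_deg: float  # vertical gaze angle in degrees
    eye_closed: bool  # True while the eyelid is closed


def angular_velocities(samples):
    """Return gaze speed in degrees per second between consecutive samples."""
    speeds = []
    for prev, cur in zip(samples, samples[1:]):
        dt_s = (cur.t_ms - prev.t_ms) / 1000.0
        if dt_s <= 0:
            raise ValueError("timestamps must be strictly increasing")
        # Small-angle approximation: treat yaw and pitch as planar coordinates.
        dist_deg = math.hypot(cur.yaw_deg - prev.yaw_deg,
                              cur.pitch_deg - prev.pitch_deg)
        speeds.append(dist_deg / dt_s)
    return speeds


def count_blinks(samples):
    """Count open-to-closed transitions, i.e. blink onsets."""
    return sum(1 for prev, cur in zip(samples, samples[1:])
               if cur.eye_closed and not prev.eye_closed)


# Illustrative velocity threshold for flagging saccades (an assumption).
SACCADE_THRESHOLD_DEG_PER_S = 30.0

samples = [
    GazeSample(0.0, 0.0, 0.0, False),
    GazeSample(10.0, 0.1, 0.0, False),   # slow drift within a fixation
    GazeSample(20.0, 3.1, 4.0, False),   # fast jump between fixation points
    GazeSample(30.0, 3.1, 4.0, True),    # eyelid closes
    GazeSample(40.0, 3.1, 4.0, False),   # eyelid reopens
]

saccade_flags = [v > SACCADE_THRESHOLD_DEG_PER_S
                 for v in angular_velocities(samples)]
blink_count = count_blinks(samples)
```

Only the second interval is flagged as a saccade, and one blink onset is counted. A production pipeline would also need to handle dropped frames and per-eye data, which this sketch leaves out.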

Why This Matters

Standard camera inputs are noisy, environment-dependent, and computationally expensive to process. The Attentix chip-first input pipeline delivers cleaner signals at lower compute cost — which means more reliable intelligence downstream and lower infrastructure overhead for your system.

Layer 2 — Intelligence Engine

Signals become human understanding.

This is the core of the Attentix Platform. Purpose-built AI models transform low-level eye signals into high-level human-state outputs — in real time, continuously, at production scale.

Core Model Functions

- Eye-region detection and precise tracking

- Temporal sequence modeling for behavioral pattern recognition

- Attention-state estimation and classification

- Fatigue onset detection and severity scoring

- Distraction event detection

- Context fusion and multi-signal confidence scoring

- Edge-optimized quantized model execution

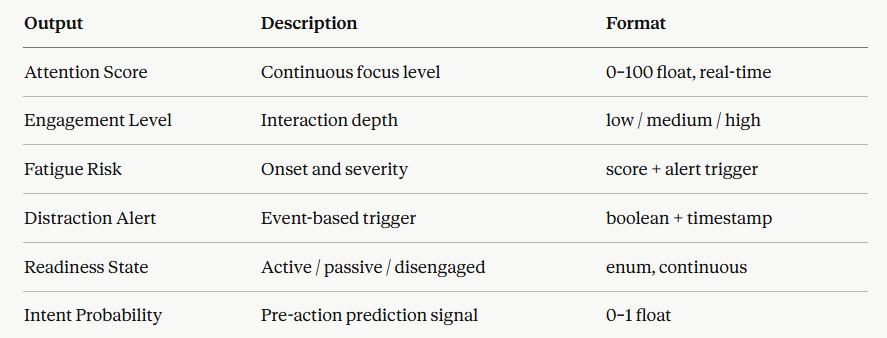

What Your AI System Receives

These outputs require no additional model training on your end. They are labeled, structured, and immediately consumable by any downstream AI system, inference pipeline, or application logic.
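As a rough illustration of what "immediately consumable" could look like in application logic, the sketch below parses a structured message and picks an action. The JSON field names, the label values, and the thresholds are all hypothetical; the actual output schema is not specified in this document.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class HumanState:
    """Hypothetical structured output; not the actual Attentix schema."""
    attention: str        # e.g. "focused" or "distracted" (assumed labels)
    fatigue_score: float  # 0.0 (alert) to 1.0 (severe), assumed scale
    confidence: float     # 0.0 to 1.0


def parse_state(message: str) -> HumanState:
    """Decode one JSON message and validate the score ranges."""
    data = json.loads(message)
    state = HumanState(
        attention=str(data["attention"]),
        fatigue_score=float(data["fatigue_score"]),
        confidence=float(data["confidence"]),
    )
    if not 0.0 <= state.fatigue_score <= 1.0:
        raise ValueError("fatigue_score must lie in [0, 1]")
    if not 0.0 <= state.confidence <= 1.0:
        raise ValueError("confidence must lie in [0, 1]")
    return state


def choose_action(state, min_confidence=0.6, fatigue_limit=0.7):
    """Map a human-state reading to an application-level action."""
    if state.confidence < min_confidence:
        return "hold"            # too uncertain to act on
    if state.fatigue_score >= fatigue_limit:
        return "suggest_break"
    if state.attention == "distracted":
        return "alert"
    return "continue"


message = '{"attention": "distracted", "fatigue_score": 0.2, "confidence": 0.9}'
action = choose_action(parse_state(message))
```

The point of the sketch is that the consuming side only branches on labeled values; no eye-tracking model runs in the application.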

Layer 3 — Deployment

Integrate once. Deploy anywhere.

Attentix is designed to fit into existing AI architectures with minimal engineering overhead. The platform supports multiple integration patterns and deployment modes — from single-device mobile apps to large-scale fleet deployments.

Integration Options

- REST API — Standard request-response for event-driven integrations

- WebSocket Streaming — Continuous real-time intelligence stream for live systems

- Mobile SDK — iOS and Android, optimized for on-device deployment

- Edge SDK — Embedded deployment for XR, automotive, and robotics hardware

- Cloud Analytics Pipeline — Centralized aggregation and dashboard integration

- Enterprise Connectors — Custom integration for enterprise system requirements

Deployment Modes

- On-device inference — Full processing on the edge device, no network dependency

- Hybrid edge + cloud — Edge inference with cloud aggregation and analytics

- Centralized analytics mode — Fleet-scale monitoring with centralized dashboards

- OEM embedded deployment — Deep hardware integration for manufacturer partners

Supported Languages

- Python

- JavaScript / TypeScript

- Swift

- Kotlin

- C++

Platform Foundations

These engineering principles guide every decision in how the Attentix Platform is built.

Real-Time Inference

Attention signals are only valuable if they arrive fast enough to act on. The Attentix Platform is built for sub-50 ms end-to-end latency from signal capture to structured output delivery, fast enough for safety-critical applications, interactive systems, and live AI response.

This is not achievable with cloud round-trip architectures. It requires on-device processing, which is why the Attention Chip is central to the platform, not optional.
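The latency argument can be sketched as a simple budget check. The stage names and millisecond values below are placeholders chosen for illustration, not measurements of the Attentix pipeline; only the 50 ms target comes from the text above.

```python
BUDGET_MS = 50.0  # the sub-50 ms end-to-end target stated above


def total_latency_ms(stages):
    """Sum per-stage latencies (in milliseconds) for one pipeline."""
    return sum(stages.values())


def within_budget(stages, budget_ms=BUDGET_MS):
    """True if the pipeline's end-to-end latency is strictly under budget."""
    return total_latency_ms(stages) < budget_ms


# Placeholder numbers, not measurements.
on_device = {"capture": 8.0, "preprocess": 6.0, "inference": 15.0, "delivery": 4.0}

# Same pipeline, but inference sits behind a hypothetical network round trip.
cloud_round_trip = dict(on_device, network_round_trip=60.0)

on_device_ok = within_budget(on_device)
cloud_ok = within_budget(cloud_round_trip)
```

With these placeholder values the on-device pipeline fits the budget and the cloud variant does not. Real figures depend on hardware, model size, and network conditions.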

Temporal Modeling

Human attention is not a snapshot — it is a continuous, dynamic state. A single eye position reading tells you very little. A sequence of readings over time tells you whether someone is drifting toward fatigue, recovering focus, or about to disengage.

The Attentix Intelligence Engine uses sequence-aware temporal models that track attention state over time — not just frame by frame. This is what makes outputs like fatigue onset detection and intent prediction possible.
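The difference between a snapshot and a sequence can be shown with a deliberately simple stand-in: a least-squares slope over a window of attention scores. This is not the sequence-aware model the Intelligence Engine uses; the function names, labels, and tolerance are assumptions for illustration.

```python
def slope_per_second(times_s, scores):
    """Least-squares slope of scores against time (score units per second)."""
    n = len(scores)
    if n < 2 or n != len(times_s):
        raise ValueError("need at least two aligned samples")
    mean_t = sum(times_s) / n
    mean_s = sum(scores) / n
    num = sum((t - mean_t) * (s - mean_s) for t, s in zip(times_s, scores))
    den = sum((t - mean_t) ** 2 for t in times_s)
    if den == 0:
        raise ValueError("timestamps must not all be equal")
    return num / den


def classify_trend(times_s, scores, tolerance=0.01):
    """Label a window as drifting, recovering, or stable from its slope."""
    slope = slope_per_second(times_s, scores)
    if slope < -tolerance:
        return "drifting"
    if slope > tolerance:
        return "recovering"
    return "stable"


times = [0.0, 1.0, 2.0]

# Both windows end on the same reading (0.7), so a single snapshot
# cannot tell them apart; the sequence can.
falling = classify_trend(times, [0.9, 0.8, 0.7])
rising = classify_trend(times, [0.5, 0.6, 0.7])
```

Here `falling` is labeled as drifting and `rising` as recovering, even though the latest score is identical in both windows.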

Edge-Optimized Architecture

The platform is designed to run efficiently in resource-constrained environments. Models are quantized for edge deployment, the chip handles signal preprocessing, and the inference stack minimizes CPU/GPU dependency.

This means Attentix works in the places where human-state intelligence matters most: XR headsets, vehicle embedded systems, robotics platforms, and mobile devices — all without requiring cloud infrastructure for core intelligence functions.
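For readers unfamiliar with quantization, the sketch below shows symmetric per-tensor int8 quantization, one common scheme for shrinking model weights. It is a generic illustration in plain Python; the document does not state which quantization scheme Attentix models actually use.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # A single scale for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the int8 representation."""
    return [q * scale for q in quantized]


weights = [0.4, -1.0, 0.25, 0.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Largest absolute error introduced by rounding to the int8 grid.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight is stored in one byte instead of four, and the rounding error per weight is bounded by half the scale. That trade of a small accuracy loss for lower memory and compute is what makes quantized models attractive on constrained hardware.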

Privacy by Design

Attentix does not transmit raw eye images, facial video, or biometric data. The chip structures signals at the source. Only numerical outputs — gaze vectors, blink events, attention scores — move through the platform.

This is not a software policy. It is enforced by the hardware architecture. Your system receives human intelligence, not human data.

For AI teams navigating GDPR, EU AI Act biometric provisions, CCPA, and automotive or healthcare data regulations — this architecture eliminates a significant compliance burden at the integration point.
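Even with hardware-side enforcement, an integrator may want a defensive check at the boundary of their own system. The sketch below accepts only an allowlisted set of numeric fields and rejects anything else, such as embedded image bytes. The field names are hypothetical and do not describe the actual Attentix payload.

```python
# Hypothetical field names; the real payload schema is not given here.
ALLOWED_FIELDS = {"timestamp_ms", "gaze_vector", "blink_event", "attention_score"}


def is_numeric(value):
    """True for numbers, booleans, and (nested) lists or tuples of them."""
    if isinstance(value, (bool, int, float)):
        return True
    if isinstance(value, (list, tuple)):
        return all(is_numeric(v) for v in value)
    return False


def check_payload(payload):
    """Return the payload unchanged, or raise if it carries unexpected data."""
    unexpected = set(payload) - ALLOWED_FIELDS
    if unexpected:
        raise ValueError(f"unexpected fields: {sorted(unexpected)}")
    for key, value in payload.items():
        if not is_numeric(value):
            raise ValueError(f"field {key!r} is not numeric")
    return payload


good = check_payload({
    "timestamp_ms": 1200,
    "gaze_vector": [0.12, -0.03, 0.99],
    "blink_event": False,
    "attention_score": 0.82,
})
```

A payload carrying a raw frame, for example `{"frame": b"..."}`, fails the allowlist check and raises `ValueError` before it reaches application code.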

Scalable from One Device to Fleet

The same platform stack that runs on a single XR headset scales to fleet-wide deployment across thousands of vehicles, devices, or users. Multi-tenant backend support, versioned APIs, and centralized analytics modes are built in — not bolted on.

Who the Platform Is Built For

AI Companies and Model Teams

You are building AI systems that need to understand human context — but you do not want to build and maintain the eye-tracking and attention inference stack yourself. Attentix gives you production-ready human-state outputs via API, so your team focuses on what you are building, not the perception layer underneath it.

XR and Spatial Computing Teams

Gaze-aware AI is a core requirement for next-generation XR experiences. Attentix integrates with Snapdragon XR, Android XR, and other leading XR platforms — providing the attention intelligence layer that makes adaptive, context-aware spatial computing possible.

Automotive Software and ADAS Teams

Driver Monitoring Systems (DMS) are becoming mandatory across major markets. Attentix provides the real-time fatigue, distraction, and readiness signals your ADAS or DMS needs: structured, low-latency, and deployable in embedded automotive environments.

Robotics and HRI Teams

Robots that work alongside humans need to understand human state — not just human position. Attentix gives your robot the ability to detect when a nearby human is distracted, fatigued, or disengaged — enabling safer and more natural human-robot interaction.

Healthcare and Clinical Teams

Cognitive assessment, fatigue monitoring, and attention-based clinical indicators require precise, continuous, and privacy-respecting eye data. Attentix provides the intelligence layer for clinical and wellness applications that need reliable human-state signals at scale.

Platform Status and Roadmap

✅ Signal Input Layer — Complete. Operational with Attention Chip hardware.

✅ Core Intelligence Engine — Complete. Real-time outputs validated at CES 2026.

🔵 REST API / WebSocket — In Development · 2026.

🔵 Mobile SDK (iOS / Android) — In Development · 2026.

🔵 Edge SDK — In Development · 2026.

⬜ Enterprise Connectors — Planned · 2027.

⬜ Fleet Analytics Dashboard — Planned · 2027.