Three Products.

One Intelligence Stack.

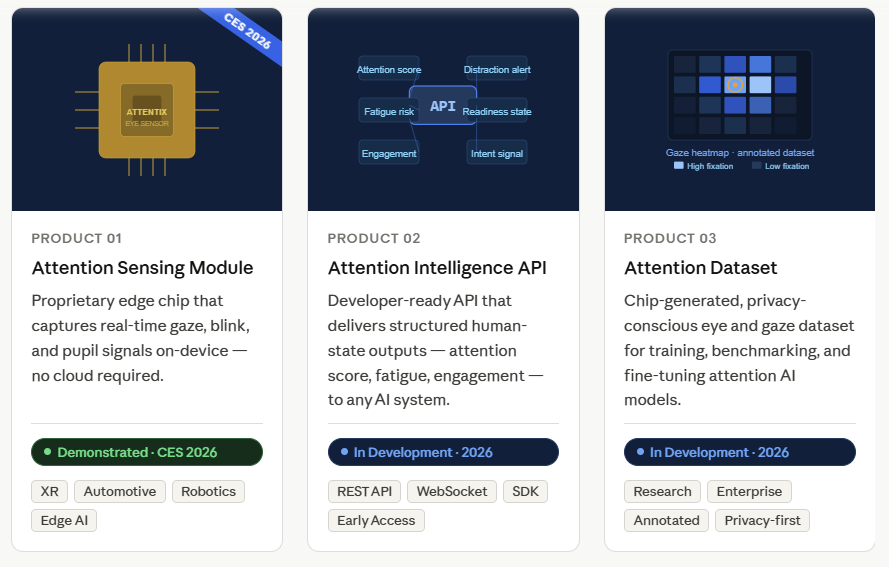

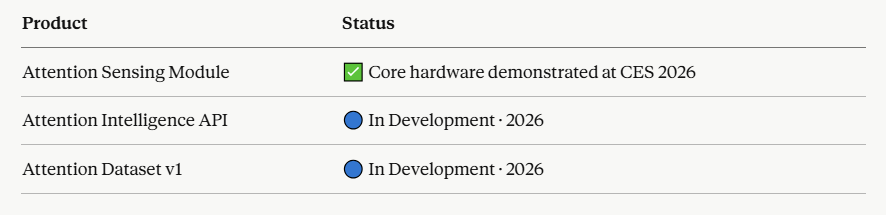

Attentix is building a modular product stack on top of a working hardware foundation. Our Attention Chip was demonstrated at CES 2026, and the core signal engine is operational. The products below make up the full commercial stack we are bringing to market, each at a different stage of development.

Where Each Product Stands Today

1. Product 01: Attention Sensing Module

Capture high-value human signals at the source.

Status: Core Hardware Demonstrated at CES 2026

The Attention Sensing Module is the hardware foundation of the Attentix stack. Built around our proprietary Attention Chip, it captures real-time gaze, blink, pupil, and behavioral signals directly at the edge — without relying on cloud pipelines or heavy GPU infrastructure.

This is not a concept. The chip was demonstrated live at CES 2026, processing real-time eye data on-device with low latency and minimal power consumption.

What It Captures

- Gaze direction and fixation points

- Blink events and blink rate

- Pupil dynamics and dilation

- Eye movement velocity

- Behavioral attention indicators
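As an illustration of how signals like these relate to one another, the sketch below derives a blink rate from a sequence of per-frame samples. It is not the on-chip method: the `EyeSample` record, its field names, and its units are assumptions made for this example only.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class EyeSample:
    """Hypothetical per-frame record; field names and units are assumptions."""
    timestamp_s: float        # capture time in seconds
    gaze_x: float             # assumed normalized horizontal gaze coordinate
    gaze_y: float             # assumed normalized vertical gaze coordinate
    pupil_diameter_mm: float  # assumed unit (millimeters)
    eye_closed: bool          # True while the eyelid is closed


def blink_rate_per_minute(samples: Sequence[EyeSample]) -> float:
    """Count open-to-closed transitions and scale them to blinks per minute."""
    if len(samples) < 2:
        return 0.0
    duration_s = samples[-1].timestamp_s - samples[0].timestamp_s
    if duration_s <= 0:
        return 0.0
    # A blink event starts wherever the eye goes from open to closed.
    blinks = sum(
        1
        for prev, cur in zip(samples, samples[1:])
        if cur.eye_closed and not prev.eye_closed
    )
    return blinks * 60.0 / duration_s
```

Counting open-to-closed transitions, rather than closed frames, keeps a long blink from being counted more than once.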

Why It Matters

Most AI systems that need eye data rely on raw camera feeds and cloud processing. This creates latency, privacy risks, and high infrastructure cost. The Attention Sensing Module solves this by structuring the data at the source — on the device, in real time.

Ideal For

- XR hardware manufacturers

- Automotive OEM systems

- Robotics platforms

- Smart device integrations

- Edge AI deployments

Availability

The core hardware has been demonstrated. We are currently engaging with OEM and hardware partners for integration and pilot programs.

→ Interested in integrating the module? Contact us to discuss partnership options.

2. Product 02: Attention Intelligence API

Turn signals into actionable intelligence.

Status: In Development · Expected 2026

The Attention Intelligence API is the software layer of the Attentix stack. It takes structured signals from the Attention Sensing Module — or compatible camera inputs — and transforms them into real-time human-state outputs that any AI system, application, or platform can consume.

We are building this for AI developers, product teams, and enterprise systems that need to understand human attention without building the underlying models themselves.

What It Delivers

- Attention score (0–100, continuous)

- Engagement level

- Fatigue risk indicator

- Distraction alert

- Readiness state

- Intent probability signal

How It Works

Structured eye signals go in. Human-state intelligence comes out. No raw video. No heavy preprocessing. No cloud dependency.
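To make the outputs listed above more concrete, here is a minimal sketch of how a client might model and validate one human-state message. The API is still in development, so this is not a published schema: the `HumanState` class, the `parse_human_state` helper, every field name, and the JSON layout are assumptions for illustration. Only the 0–100 range of the attention score comes from the description above.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class HumanState:
    """Hypothetical client-side model of one human-state output."""
    attention_score: float     # continuous, 0-100 (range stated above)
    engagement_level: str      # assumed categorical label, e.g. "high"
    fatigue_risk: float        # assumed probability in [0, 1]
    distraction_alert: bool    # assumed boolean flag
    readiness_state: str       # assumed categorical label, e.g. "ready"
    intent_probability: float  # assumed probability in [0, 1]

    def __post_init__(self) -> None:
        # Reject values outside the ranges this sketch assumes.
        if not 0.0 <= self.attention_score <= 100.0:
            raise ValueError("attention_score must be within 0-100")
        for name in ("fatigue_risk", "intent_probability"):
            if not 0.0 <= getattr(self, name) <= 1.0:
                raise ValueError(f"{name} must be within 0-1")


def parse_human_state(payload: str) -> HumanState:
    """Parse a JSON message (hypothetical layout) into a validated HumanState."""
    data = json.loads(payload)
    return HumanState(
        attention_score=float(data["attention_score"]),
        engagement_level=str(data["engagement_level"]),
        fatigue_risk=float(data["fatigue_risk"]),
        distraction_alert=bool(data["distraction_alert"]),
        readiness_state=str(data["readiness_state"]),
        intent_probability=float(data["intent_probability"]),
    )
```

Validating ranges at the boundary means downstream code can rely on the score staying within 0–100 without re-checking it.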

Integration Options

- REST API

- WebSocket streaming

- Mobile SDK (iOS / Android)

- Edge SDK

- Enterprise connectors

Ideal For

- AI companies building perception layers

- SaaS platforms requiring user-state awareness

- Automotive and XR software teams

- Healthcare monitoring applications

- Enterprise productivity and safety systems

Availability

Currently in development. We are accepting applications for our Early Access Program. Early access partners will receive priority API access, integration support, and the opportunity to shape the product roadmap.

→ Apply for Early Access

3. Product 03: Attention Dataset

Train and validate attention models at scale.

Status: In Development · Expected 2026

The Attention Dataset is a structured, privacy-conscious collection of gaze and behavioral signals designed for AI teams building or improving human-attention models. It is generated using our Attention Chip hardware, so the data reflects real-time, high-fidelity signals captured at the edge rather than relying on simulated or synthetic approximations alone.

This dataset exists because high-quality, labeled eye-tracking and attention data is one of the scarcest resources in AI development today. Most teams building attention-aware models either collect their own data at high cost or rely on limited public datasets that do not reflect real-world conditions.

What It Includes

- Annotated gaze and fixation data

- Blink event sequences

- Pupil dynamics under varied conditions

- Behavioral attention labels

- Structured metadata per sample

- Versioned dataset releases

Data Standards

- Privacy-conscious collection and processing

- Consent-based data sourcing

- Edge-captured signals for real-world fidelity

- Structured annotation format

Use Cases

- Training attention and gaze models

- Benchmarking existing model performance

- Fine-tuning foundation models for human-state tasks

- Simulation and synthetic data validation

- Academic and applied research

Licensing Options

- Research licensing

- Commercial / enterprise licensing

- Custom data programs for specific use cases

Availability

Currently in development. Research and enterprise partners can apply now to be included in our early dataset program.

→ Apply for Dataset Access

Why the Three Products Work Together

Most companies building human-aware AI face a fragmented stack problem. They source hardware from one vendor, build models on incomplete datasets, and integrate APIs that were not designed for their deployment environment.

Attentix solves this by offering a connected stack:

The Attention Sensing Module captures the signal. The Attention Intelligence API turns it into meaning. The Attention Dataset trains the next generation of models.

Each product can be adopted independently. Together, they form the fastest path to deploying human-state intelligence in a real-world system.

Commercial Models

Attentix products are designed to support multiple deployment and revenue structures:

- Embedded OEM partnerships

- Hardware module sales and OEM licensing

- API subscription (usage-based and enterprise tiers)

- Dataset research and commercial licensing

- Custom integration and pilot programs